We can provide models at four detail levels optimized for your different use cases, which we discuss in more detail later. You can also use it directly on your folder of images to try building a model for yourself before writing any code.įinally, you can preview the USDZ output models right on your Mac. We also provide HelloPhotogrammetry, a sample command-line app to help you get started. The API is supported on recent Intel-based Macs, but will run fastest on all the newest Apple silicon Macs since we can utilize the Apple Neural Engine to speed up our computer vision algorithms. Once you have captured a folder of images, you need to copy them to your Mac where you can use the Object Capture API to turn them into a 3D model in just minutes. If you capture on iPhone or iPad, we can use stereo depth data from supported devices to allow the recovery of the actual object size, as well as the gravity vector so your model is automatically created right-side up. We will provide best practices for capture later in the session. You just need to make sure you get clear photos from all angles around the object. Images can be taken on your iPhone or iPad, DSLR, or even a drone. Now let's look at each of these steps in slightly more detail.įirst, you capture photos of your object from all sides. The output model includes both a geometric mesh as well as various material maps, and is ready to be dropped right into your app or viewed in AR Quick Look. Using a computer vision technique called "photogrammetry", the stack of 2D images is turned into a 3D model in just minutes. Next, you copy the images to a Mac which supports the new Object Capture API.

Normally, you'd need to hire a professional artist for many hours to model the shape and texture.īut, wait, it took you only minutes to bake in your own oven! With Object Capture, you start by taking photos of your object from every angle. Looks delicious, right? Suppose we want to capture the pizza in the foreground as a 3D model.

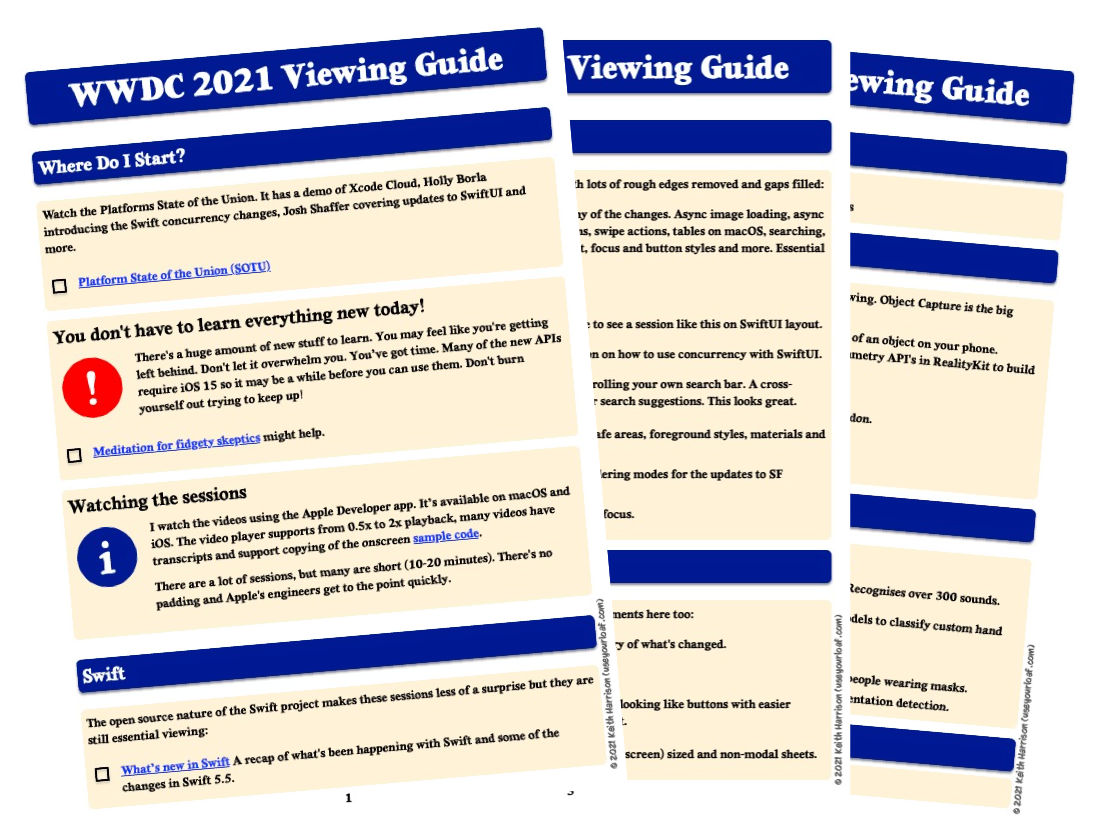

Let's say you have some freshly baked pizza in front of you on the kitchen table. You may have also used Reality Composer and Reality Converter to produce 3D models for AR.Īnd now, with the Object Capture API, you can easily turn images of real-world objects into detailed 3D models. You may already be familiar with creating augmented reality apps using our ARKit and RealityKit frameworks. Today, my colleague Dave McKinnon and I will be showing you how to turn real-world objects into 3D models using our new photogrammetry API on macOS. You'll want to tune in, in other words, as there's bound to a few twists and surprises.♪ Bass music playing ♪ ♪ Michael Patrick Johnson: Hi! My name is Michael Patrick Johnson, and I am an engineer on the Object Capture team. And this isn't including the occasional wildcard on Apple's part. However, Apple is also rumored to be introducing new 14- and 16-inch MacBook Pro models, complete with new silicon (M1X? M2?) to power them. You can expect big news on software releases like iOS 15, iPadOS 15, watchOS 8, tvOS 15 and the next macOS release. This may be one of Apple's more packed WWDC events in recent memory. And be sure to check out Engadget's YouTube channel after the fact - we'll have a post-event stream to offer insightful (and hopefully witty) commentary. You can watch the developer conference opener here through Apple's YouTube channel (below), but you can also catch it on Apple's website through most browsers as well as the Apple TV app on a wide range of devices. Accordingly, it's giving you plenty of ways to watch the event when it starts at 1PM Eastern. Apple is about to kick off WWDC 2021 with its customary keynote, and expectations are riding high this year.
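The workflow described in this session (a folder of images in, a USDZ model out at a chosen detail level) can be sketched in Swift roughly as follows. This is a minimal sketch, not the session's own sample code: it assumes macOS 12 or later with RealityKit's `PhotogrammetrySession`, and the helper names `detailLevel(named:)` and `reconstructModel(from:to:detail:)` are hypothetical, introduced here only for illustration.

```swift
import Foundation
import RealityKit

/// Hypothetical helper: maps a user-supplied name to one of the four
/// detail levels mentioned in the session.
@available(macOS 12.0, *)
func detailLevel(named name: String) -> PhotogrammetrySession.Request.Detail? {
    switch name.lowercased() {
    case "preview": return .preview
    case "reduced": return .reduced
    case "medium":  return .medium
    case "full":    return .full
    default:        return nil
    }
}

/// Hypothetical helper: reconstructs a USDZ model from a folder of images.
/// Assumes `imagesFolder` exists and contains the captured photos.
@available(macOS 12.0, *)
func reconstructModel(from imagesFolder: URL,
                      to outputFile: URL,
                      detail: PhotogrammetrySession.Request.Detail) async throws {
    // Create a session over the image folder with the default configuration.
    let session = try PhotogrammetrySession(
        input: imagesFolder,
        configuration: PhotogrammetrySession.Configuration())

    // Start listening for output messages before submitting any request.
    let listener = Task {
        for try await output in session.outputs {
            switch output {
            case .requestProgress(_, let fraction):
                print("Progress: \(Int(fraction * 100))%")
            case .requestComplete(_, .modelFile(let url)):
                print("Model written to \(url.path)")
            case .requestError(_, let error):
                throw error
            case .processingComplete:
                return  // All queued requests have finished.
            default:
                break   // Ignore messages this sketch does not handle.
            }
        }
    }

    // Ask for a single model file at the requested detail level.
    try session.process(requests: [.modelFile(url: outputFile, detail: detail)])

    // Wait until processing completes or an error is thrown.
    try await listener.value
}
```

A caller might invoke it from an async context as `try await reconstructModel(from: folderURL, to: modelURL, detail: .reduced)`, where `folderURL` and `modelURL` are placeholder URLs for the image folder and the `.usdz` output. Starting the listener before calling `process(requests:)` is a deliberate choice so that no progress or completion messages are missed.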
